A palette makes it easy for painters to arrange and mix paints of different colors as they create art on the canvas before them. A similar tool that lets AI jointly learn from diverse data sources, such as conversations, narratives, images, and knowledge, could open the door for researchers and scientists to develop AI systems capable of more general intelligence.

A palette allows a painter to arrange and mix paints of different colors. SpaceFusion seeks to help AI scientists do similar things for different models trained on different datasets.

For deep learning models today, datasets are usually represented by vectors in different latent spaces using different neural networks. In the paper "Jointly Optimizing Diversity and Relevance in Neural Response Generation," my co-authors and I propose SpaceFusion, a learning paradigm to align these different latent spaces—arrange and mix them smoothly like the paint on a palette—so AI can leverage the patterns and knowledge embedded in each of them. This work, which we’re presenting at the 2019 Annual Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT), is part of the Data-Driven Conversation project, and an implementation of it is available on GitHub.

As a first attempt, we applied this technique to neural conversational AI. In our setup, a neural model is expected to generate relevant and interesting responses given a conversation history, or context. While promising advances in neural conversation models have been made, these models tend to play it safe, producing generic and dull responses. Approaches have been developed to diversify these responses and better capture the color of human conversation, but oftentimes, there is a tradeoff, with relevancy declining.

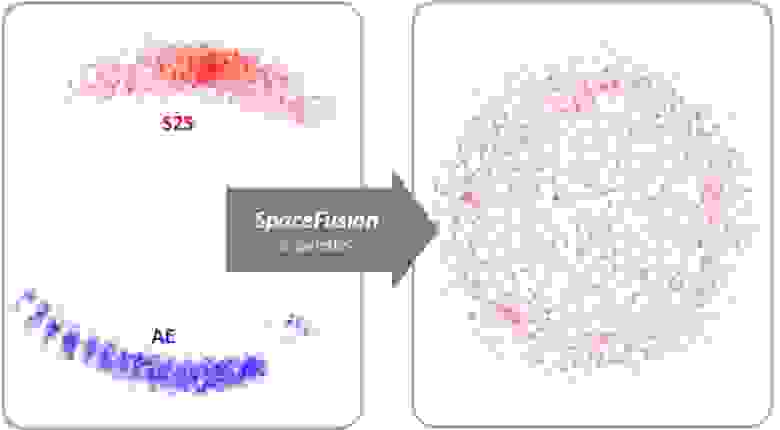

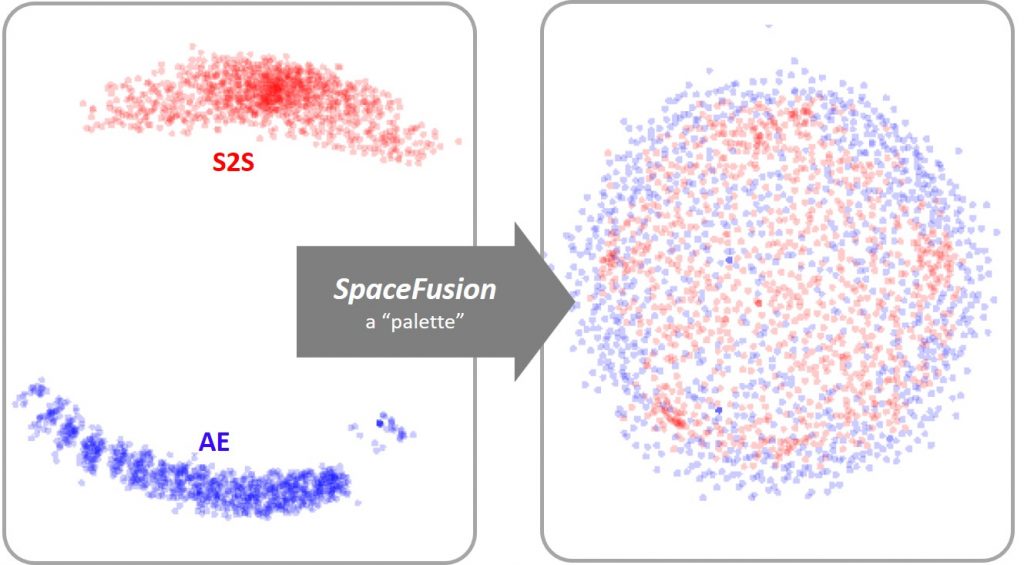

Figure 1: Like a palette allows for the easy combination of paints, SpaceFusion aligns, or mixes, the latent spaces learned from a sequence-to-sequence (S2S, red dots) model and an autoencoder (AE, blue dots) to jointly utilize the two models more efficiently.

SpaceFusion tackles this problem by aligning the latent spaces learned from two models (Figure 1):

The jointly learned model can utilize the strengths of both models and arrange data points in a more structured way.

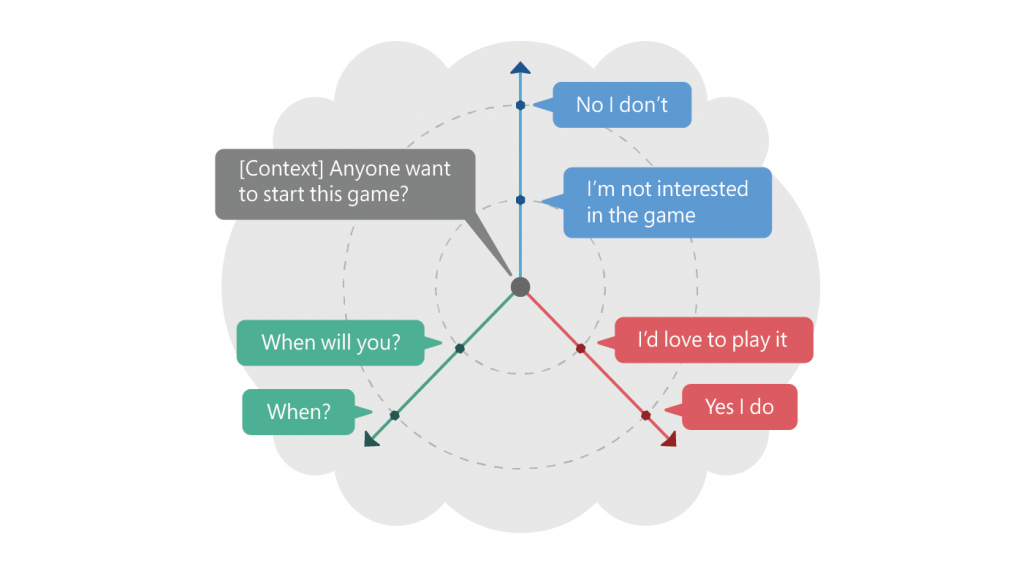

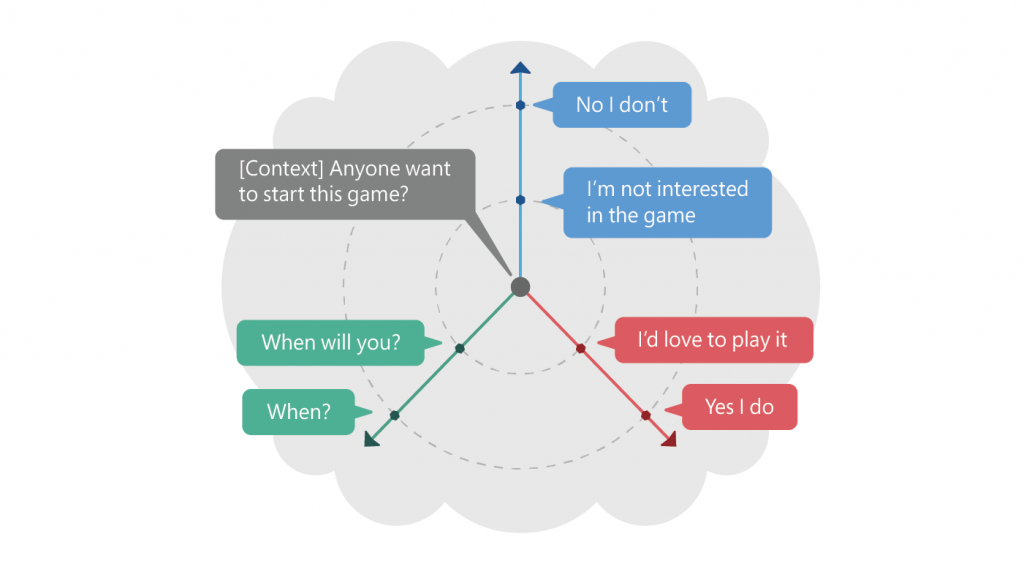

Figure 2: The above illustrates one context and its multiple responses in the latent space induced by SpaceFusion. Distance and direction from the predicted response vector given the context roughly match the relevance and diversity, respectively.

For example, as illustrated in Figure 2, given a context—in this case, «Anyone want to start this game?»—the positive responses «I’d love to play it» and «Yes, I do» are arranged along the same direction. The negative ones—«I’m not interested in the game» and «No, I don’t»—are mapped on a line in another direction. Diversity in responses is achieved by exploring the latent space along different directions. Furthermore, the distance in the latent space corresponds to the relevancy. Responses farther away from the context—«Yes, I do» and «No, I don’t»—are usually generic, while those closer are more relevant to the specific context: «I’m not interested in the game» and «When will you?»

SpaceFusion disentangles the criteria of relevancy and diversity and represents them in two independent dimensions—direction and distance—making it easier to jointly optimize both. Our empirical experiments and human evaluation have shown that SpaceFusion performs better in these two criteria compared to competitive baselines.

So, how exactly does SpaceFusion align different latent spaces?

The idea is quite intuitive: For each pair of points from two different latent spaces, we first minimize their distance in the shared latent space and then encourage a smooth transition between them. This is done by adding two novel regularization terms—distance term and smoothness term—to the objective function.

Taking conversation as the example, the distance term measures the Euclidean distance between a point from the S2S latent space, which is mapped from the context and represents the predicted response, and the points from the AE latent space, which correspond to its target responses. Minimizing such distance encourages the S2S model to map the context to a point close to and surrounded by its responses in the shared latent space, as illustrated by Figure 2.

The smoothness term measures the likelihood of generating the target response from a random interpolation between the point mapped from the context and the one mapped from the response. By maximizing this likelihood, we encourage a smooth transition of the meaning of the generated responses as we move away from the context. This allows us to explore the neighborhood of the prediction point made by the S2S and thus generate diverse responses that are relevant to the context.

With these two novel regularizations added in the objective function, we put the distance and smoothness constraints on the learning of latent space, so the training will not only focus on the performance on each latent space, but also try to align them together by adding these desired structures. Our work focused on conversational models, but we expect that SpaceFusion can align the latent spaces learned by other models trained on different datasets. This makes it possible to bridge different abilities and knowledge domains learned by each specific AI system and is a baby step toward a more general intelligence.

A palette allows a painter to arrange and mix paints of different colors. SpaceFusion seeks to help AI scientists do similar things for different models trained on different datasets.

In today's deep learning models, different datasets are usually encoded by different neural networks into vectors in different latent spaces. In the paper "Jointly Optimizing Diversity and Relevance in Neural Response Generation," my co-authors and I propose SpaceFusion, a learning paradigm that aligns these latent spaces, arranging and mixing them smoothly like paint on a palette, so that AI can leverage the patterns and knowledge embedded in each of them. This work, which we’re presenting at the 2019 Annual Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT), is part of the Data-Driven Conversation project, and an implementation of it is available on GitHub.

Capturing the color of human conversation

As a first attempt, we applied this technique to neural conversational AI. In our setup, a neural model is expected to generate relevant and interesting responses given a conversation history, or context. Although neural conversation models have made promising advances, they tend to play it safe, producing generic and dull responses. Approaches have been developed to diversify these responses and better capture the color of human conversation, but they often come with a tradeoff: relevance declines.

Figure 1: Like a palette allows for the easy combination of paints, SpaceFusion aligns, or mixes, the latent spaces learned from a sequence-to-sequence (S2S, red dots) model and an autoencoder (AE, blue dots) to jointly utilize the two models more efficiently.

SpaceFusion tackles this problem by aligning the latent spaces learned from two models (Figure 1):

- a sequence-to-sequence (S2S) model, which aims to produce relevant responses, but may lack diversity; and

- an autoencoder (AE) model, which is capable of representing diverse responses, but doesn’t capture their relation to the conversation.

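The jointly learned model can draw on the strengths of both models and arrange data points in a more structured way, where a response's position in the shared latent space reflects both its relevance and its diversity.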

Figure 2: The above illustrates one context and its multiple responses in the latent space induced by SpaceFusion. Distance and direction from the predicted response vector given the context roughly match the relevance and diversity, respectively.

For example, as illustrated in Figure 2, given the context «Anyone want to start this game?», the positive responses «I’d love to play it» and «Yes, I do» are arranged along the same direction, while the negative ones, «I’m not interested in the game» and «No, I don’t», lie along another direction. Diversity in responses is achieved by exploring the latent space along different directions. Distance in the latent space, in turn, corresponds to relevance: responses farther from the context, such as «Yes, I do» and «No, I don’t», are usually generic, while those closer to it, such as «I’m not interested in the game» and «When will you?», are more specific to the context.
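To make the geometry concrete, the following minimal sketch places the four Figure 2 responses at hand-picked 2-D coordinates. These vectors are hypothetical. They were chosen only to mimic the figure's layout and are not produced by SpaceFusion. Distance from the predicted point is Euclidean, and direction is compared with cosine similarity.

```python
import math

# Hypothetical 2-D latent vectors, hand-picked to mimic the layout in Figure 2.
# They are NOT outputs of a trained SpaceFusion model.
Z_PRED = (0.0, 0.0)  # point predicted from the context "Anyone want to start this game?"
RESPONSES = {
    "I'd love to play it": (1.0, 0.0),
    "Yes, I do": (2.0, 0.0),
    "I'm not interested in the game": (0.0, 1.0),
    "No, I don't": (0.0, 2.0),
}


def offset(z):
    """Vector from the predicted point to a response point."""
    return [a - b for a, b in zip(z, Z_PRED)]


def distance(z):
    """Euclidean distance from the predicted point (in this toy, smaller = more context-specific)."""
    return math.dist(z, Z_PRED)


def direction_similarity(z1, z2):
    """Cosine similarity of two offsets: 1.0 means the same direction, 0.0 orthogonal."""
    u, v = offset(z1), offset(z2)
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


for text, z in RESPONSES.items():
    print(f"{text}: distance = {distance(z):.1f}")

print(direction_similarity(RESPONSES["I'd love to play it"], RESPONSES["Yes, I do"]))  # 1.0
print(direction_similarity(RESPONSES["Yes, I do"], RESPONSES["No, I don't"]))  # 0.0
```

The two positive responses share a direction and differ only in distance. The positive and negative groups point in orthogonal directions.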

SpaceFusion thus disentangles relevance and diversity and represents them along two independent dimensions, distance and direction respectively, which makes it easier to optimize both jointly. In our empirical experiments and human evaluation, SpaceFusion performed better than competitive baselines on both criteria.
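Because relevance and diversity map to separate geometric quantities, one could in principle hold one fixed while varying the other. The minimal sketch below assumes a trained model has already produced a predicted point `z_pred` for a context. It draws latent points at a fixed distance from that point in uniformly random directions, and each point would then be decoded into a candidate response. The function `sample_around` and this particular sampling scheme are illustrative assumptions, not necessarily the procedure used in the paper.

```python
import math
import random


def sample_around(z_pred, radius, n, seed=0):
    """Return n points at exactly `radius` from z_pred, each in a random direction.

    A direction is drawn uniformly on the unit sphere by normalizing a standard
    Gaussian vector. Keeping `radius` fixed holds the distance (relevance) constant
    while the direction (diversity) varies from sample to sample.
    """
    rng = random.Random(seed)
    points = []
    while len(points) < n:
        g = [rng.gauss(0.0, 1.0) for _ in z_pred]
        length = math.sqrt(sum(x * x for x in g))
        if length == 0.0:  # vanishingly unlikely; redraw to avoid dividing by zero
            continue
        points.append([z + radius * x / length for z, x in zip(z_pred, g)])
    return points


# Hypothetical usage: three candidate latent points at distance 0.5 from the prediction.
# Each would then be passed to the decoder (not shown) to produce a response.
z_pred = [0.2, -0.1, 0.4]
candidates = sample_around(z_pred, radius=0.5, n=3)
for p in candidates:
    print([round(x, 3) for x in p], round(math.dist(p, z_pred), 6))
```

Under the geometry described above, a larger `radius` would be expected to give less context-specific responses. Drawing more directions at the same radius adds variety without moving farther from the context.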

Learning a shared latent space

So, how exactly does SpaceFusion align different latent spaces?

The idea is intuitive: for each pair of points from two different latent spaces, we minimize their distance in the shared latent space and encourage a smooth transition between them. We do this by adding two novel regularization terms, a distance term and a smoothness term, to the objective function.
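One way to write the resulting training objective for a context $x$ and one of its target responses $y$ is sketched below. The notation is an assumption introduced for illustration. $z_{\text{S2S}}(x)$ and $z_{\text{AE}}(y)$ are the two models' latent vectors. $p_\theta$ is a decoder that generates a response from a point in the shared space and is assumed to be shared by both models. $\mathcal{L}_{\text{S2S}}$ and $\mathcal{L}_{\text{AE}}$ are each model's usual loss, and $\lambda_d, \lambda_s$ are assumed weighting hyperparameters. The sketch follows only the two terms described here, summed or averaged over the target responses of $x$. The paper's exact formulation may differ.

$$
\mathcal{L}(x, y) = \mathcal{L}_{\text{S2S}} + \mathcal{L}_{\text{AE}}
+ \lambda_d \underbrace{\big\lVert z_{\text{S2S}}(x) - z_{\text{AE}}(y) \big\rVert_2}_{\text{distance term}}
- \lambda_s \underbrace{\mathbb{E}_{u \sim \mathcal{U}(0,1)}\Big[\log p_\theta\big(y \mid u\, z_{\text{S2S}}(x) + (1-u)\, z_{\text{AE}}(y)\big)\Big]}_{\text{smoothness term}}
$$

The distance term enters with a positive sign because it is minimized. The smoothness term enters with a negative sign because the likelihood it measures is maximized.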

Taking conversation as the example, the distance term measures the Euclidean distance between the point in the S2S latent space, which is mapped from the context and represents the predicted response, and the points in the AE latent space that correspond to the context's target responses. Minimizing this distance encourages the S2S model to map the context to a point close to, and surrounded by, its responses in the shared latent space, as illustrated in Figure 2.
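A minimal pure-Python sketch of the distance term for one context follows. Averaging over all target responses of the context is an assumption, as is the function name `distance_term`. A real implementation would compute this on batched tensors so that gradients reach both encoders.

```python
import math


def distance_term(z_s2s, z_ae_responses):
    """Mean Euclidean distance between the S2S point for one context and the
    AE points of that context's target responses (lower is better)."""
    if not z_ae_responses:
        raise ValueError("need at least one target response")
    return sum(math.dist(z_s2s, z_ae) for z_ae in z_ae_responses) / len(z_ae_responses)


# Hypothetical 2-D points: two target responses for one context.
responses = [(3.0, 4.0), (0.0, 1.0)]
print(distance_term((0.0, 0.0), responses))  # (5.0 + 1.0) / 2 = 3.0
print(distance_term((1.5, 2.5), responses))  # a point between the responses scores lower
```

On its own, a term like this is also minimized by mapping every point to the same location, and this sketch does nothing to prevent that collapse. In the combined objective, the likelihood terms penalize such a collapse, because the decoder could no longer tell different responses apart.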

The smoothness term measures the likelihood of generating the target response from a random interpolation between the point mapped from the context and the point mapped from the response. Maximizing this likelihood encourages the meaning of the generated responses to change smoothly as we move away from the context. This lets us explore the neighborhood of the point predicted by the S2S model and thus generate diverse responses that remain relevant to the context.
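The smoothness term can be sketched the same way. Here `log_prob_target` is a hypothetical stand-in for the decoder's log-likelihood of the target response given a latent point. `toy_log_prob` is an invented toy decoder included only so the example runs. In a real model, this value would come from the response decoder and be differentiated through both encoders. The sketch estimates the expectation over the random interpolation weight `u` by Monte Carlo sampling.

```python
import random
from typing import Callable, List, Sequence


def interpolate(z_s2s: Sequence[float], z_ae: Sequence[float], u: float) -> List[float]:
    """Point on the segment between the two latent points: u=1 gives z_s2s, u=0 gives z_ae."""
    return [u * a + (1.0 - u) * b for a, b in zip(z_s2s, z_ae)]


def smoothness_term(
    z_s2s: Sequence[float],
    z_ae: Sequence[float],
    log_prob_target: Callable[[List[float]], float],
    n_samples: int = 8,
    seed: int = 0,
) -> float:
    """Monte Carlo estimate of -E_u[log p(y | z_u)] with u ~ Uniform(0, 1).

    `log_prob_target(z)` stands in for the decoder's log-likelihood of the
    target response y given latent point z (hypothetical interface).
    Minimizing this value maximizes the likelihood described in the text.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        u = rng.random()  # random position between the two points
        total += log_prob_target(interpolate(z_s2s, z_ae, u))
    return -total / n_samples


# Toy stand-in decoder (hypothetical): log-likelihood falls off with squared
# distance from the point where the target response "lives".
TARGET_POINT = [0.0, 0.0]


def toy_log_prob(z: List[float]) -> float:
    return -sum((a - b) ** 2 for a, b in zip(z, TARGET_POINT))


print(smoothness_term([1.0, 0.0], [0.0, 0.0], toy_log_prob))
```

At `u = 1` the interpolated point is the S2S prediction, and at `u = 0` it is the AE point, so the samples cover the whole segment between them.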

With these two novel regularization terms added to the objective function, we place distance and smoothness constraints on how the latent space is learned. Training therefore does more than optimize performance within each latent space: it also aligns the spaces by imposing these desired structures. Our work focused on conversational models, but we expect that SpaceFusion can also align the latent spaces learned by other models trained on different datasets. This makes it possible to bridge the different abilities and knowledge domains learned by each specific AI system, and it is a baby step toward more general intelligence.